BigQuery DataGrip

10/11/2023

This article explains what an external database adaptor is and how to use one. Velo by Wix is a full-stack development platform with integrated databases and backend code capabilities. Wix Content Management System (CMS) data collections are document-oriented databases, optimized to store and retrieve websites' content. Collections run on secure, shared infrastructure and are fully managed by Wix. Data collections are globally replicated and have native support for PII encryption and GDPR.

While CMS collections cover a wide range of use cases for content-driven websites and applications, some projects may have very specific requirements that cannot be addressed by the integrated database solution. To address such cases, Velo by Wix allows users to connect an external database to their Wix site by using an external database adaptor. Once the connection is set up, users can use wix-data APIs, display data in Wix Editor elements, use the data to create dynamic pages, and connect it to user input elements.

When you create a data source, DataGrip connects to a database automatically to receive database objects. Then the connection closes. Names of data sources that interact with a database are shown in the Database Explorer with a little green circle. The Trino JDBC driver and DataGrip 2021.2 work with SEP 354-e or newer. Use the following steps to prepare DataGrip to access your cluster: Get.

When completing the last step of setting up your BigQuery connection, double. This error occurs if you try to connect with a JSON key rather than a P12 key.

A file-based data source is used to get the data to be written to BigQuery via the data sink. The following example bulk inserts data from a number of .csv source files into a new BigQuery table. Jinja templating is used to generate explicit keys for each source file provided by the sourcefiles runtime variable generated by the nodes data source.
Visit the Velo by Wix website to onboard and continue learning.

Due to the large number of tables and volumes of data, it's not possible to extract the data and perform a diff operation. There are tools available, like JetBrains DataGrip, which can help connect to Teradata via JDBC (in the absence of a DB connector).

This saves the data into a centralised PostgreSQL database and allows you to query it using a GraphQL API. This is perfect for apps which need data at query time.

Stack Overflow is leveraging AI to summarize the most relevant questions and answers from the community, with the option to ask follow-up questions in a conversational format.

Long, complex queries in subselects impair readability and troubleshooting, as do inline views, and both of these should be avoided in long queries. Instead, use VIEWs if you can (note that if you are on MySQL, views do not perform all that well, but on most other databases they do), and use common table expressions where those don't work (MySQL supports these only from version 8.0 onward). Long, complex queries work pretty well, for both maintainability and performance, when you keep your WHERE clauses simple and do as much as you can with joins instead of subselects. The goal is to make it so that "records aren't showing up" gives you a few very specific places in the query to check (is it getting dropped in a join or filtered out in a WHERE clause?) and so the maintenance team can actually maintain things. Regarding scalability, keep in mind that the more flexibility the planner has, the better.

Edit: You mention this is MySQL, so views are unlikely to perform that well, and CTEs are out of the question on versions before 8.0. Additionally, the example given is not particularly long or complex, so that's no problem.
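The advice about preferring joins and common table expressions over subselects can be sketched as follows. This is only an illustration under stated assumptions: it uses Python's built-in `sqlite3` module rather than MySQL or BigQuery, and the `customers` and `orders` tables and their rows are hypothetical, invented for the example.

```python
import sqlite3


def build_demo_db() -> sqlite3.Connection:
    """Create an in-memory database with two small, hypothetical tables."""
    conn = sqlite3.connect(":memory:")
    conn.executescript(
        """
        CREATE TABLE customers (id INTEGER PRIMARY KEY, name TEXT, region TEXT);
        CREATE TABLE orders (id INTEGER PRIMARY KEY, customer_id INTEGER, total REAL);
        INSERT INTO customers VALUES (1, 'Ada', 'EU'), (2, 'Bo', 'US'), (3, 'Cy', 'EU');
        INSERT INTO orders VALUES (10, 1, 50.0), (11, 1, 25.0), (12, 2, 40.0);
        """
    )
    return conn


# Subselect style: the filtering logic is spread over a correlated
# subquery in the SELECT list and an IN (...) subquery in the WHERE clause.
SUBSELECT_QUERY = """
SELECT c.name,
       (SELECT SUM(o.total) FROM orders o WHERE o.customer_id = c.id) AS spent
FROM customers c
WHERE c.id IN (SELECT customer_id FROM orders)
  AND c.region = 'EU'
ORDER BY c.name
"""

# CTE + join style: one named aggregation step, one join, one simple WHERE.
# A missing record was either dropped by the join or filtered by the WHERE.
CTE_JOIN_QUERY = """
WITH order_totals AS (
    SELECT customer_id, SUM(total) AS spent
    FROM orders
    GROUP BY customer_id
)
SELECT c.name, t.spent
FROM customers c
JOIN order_totals t ON t.customer_id = c.id
WHERE c.region = 'EU'
ORDER BY c.name
"""


def run(conn: sqlite3.Connection, sql: str) -> list:
    """Execute a query and return all rows as a list of tuples."""
    return conn.execute(sql).fetchall()


conn = build_demo_db()
subselect_rows = run(conn, SUBSELECT_QUERY)
join_rows = run(conn, CTE_JOIN_QUERY)
print(subselect_rows == join_rows)  # both styles return the same rows here
```

In this sketch the customer `Cy` is absent from both results because there are no matching orders. In the second query that can only happen at the `JOIN`, which is the kind of "few very specific places to check" the answer describes.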

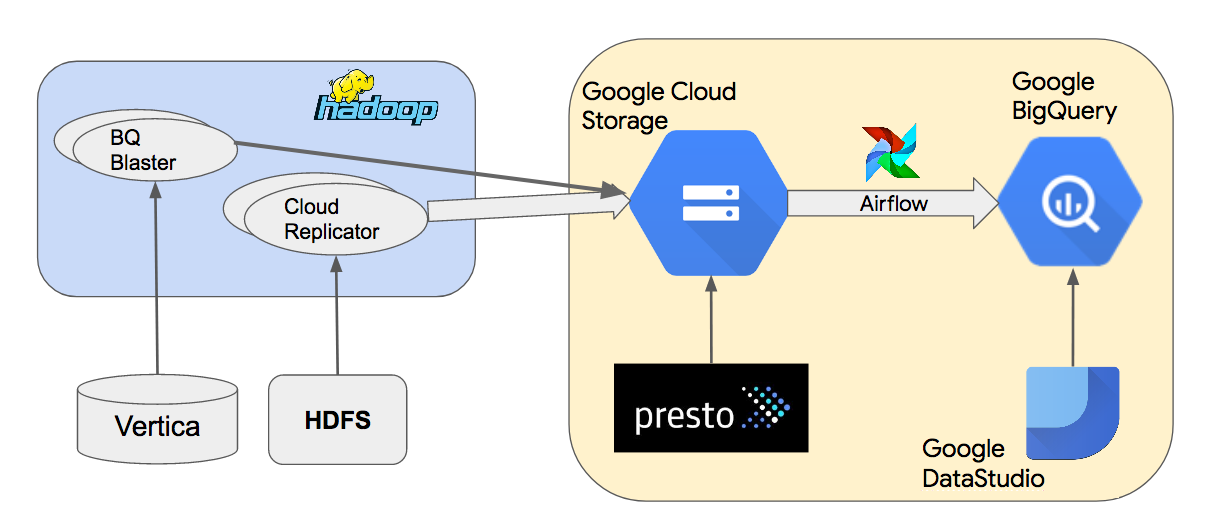

Connect BigQuery & DataGrip: BigQuery is a serverless data warehouse that lets you consolidate and analyze data from multiple sources, and DataGrip helps you manage your databases. strongDM supports DataGrip and other GUI tools. If the client is capable of connecting to BigQuery, it will work with strongDM. We have two databases: Teradata and BigQuery.

However, large queries do need to be written with maintainability in mind. In general, I have found simple, well-structured SQL to be easy to debug even when a single query goes on for 200+ lines. This is because you usually have a pretty good idea of what kind of problem you are dealing with, so there are only a few areas in the query that you have to check. The maintenance problems, IME, come in when the structure of the SQL breaks down.

Are you connecting to your database and then disconnecting immediately (e.g.

I am going to disagree with datagod here on large and complicated queries. I see these as problems only if they are disorganized. Performance-wise, they are almost always better because the planner has much more freedom in how to go about retrieving the information.
